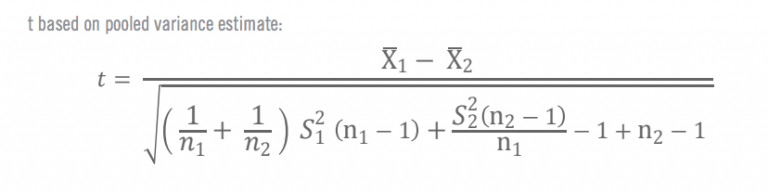

T-tests in R are among the most common tests in statistics. We use a t-test to determine whether the means of two groups are equal to each other. The null hypothesis is that the two means are equal, and the alternative is that they are not equal. The assumption for the classical two-sample test is that both groups are sampled from normal distributions with equal variances. Under the null hypothesis, we can then calculate a t-statistic that follows a t-distribution with $n_1 + n_2 - 2$ degrees of freedom. We will also look at various types of t-test in R, such as the one-sample and Welch t-tests, and learn how to perform t-tests in R and what they are used for.
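As a minimal sketch of the tests mentioned above, the following R snippet runs a pooled-variance, a Welch, and a one-sample t-test with the base `t.test` function. The data are simulated, and the names `group_a`, `group_b`, and the chosen sizes and means are assumptions made purely for illustration. The pooled statistic is also computed by hand to show where the $n_1 + n_2 - 2$ degrees of freedom come from.

```r
# Simulated data; names, sizes, and parameters are illustrative assumptions.
set.seed(1)
group_a <- rnorm(30, mean = 10, sd = 2)
group_b <- rnorm(25, mean = 11, sd = 2)
n1 <- length(group_a)
n2 <- length(group_b)

# Pooled-variance (Student) two-sample t-test: assumes equal variances.
pooled <- t.test(group_a, group_b, var.equal = TRUE)

# Welch two-sample t-test: the default in t.test, no equal-variance assumption.
welch <- t.test(group_a, group_b)

# One-sample t-test of group_a against a hypothesised mean of 10.
one_sample <- t.test(group_a, mu = 10)

# The pooled t-statistic by hand: pooled variance, then the standardised
# difference in means.
sp2 <- ((n1 - 1) * var(group_a) + (n2 - 1) * var(group_b)) / (n1 + n2 - 2)
t_manual <- (mean(group_a) - mean(group_b)) / sqrt(sp2 * (1 / n1 + 1 / n2))

# Degrees of freedom of the pooled test (n1 + n2 - 2) and of the Welch test.
pooled$parameter
welch$parameter
```

Printing `pooled`, `welch`, or `one_sample` shows the statistic, degrees of freedom, p-value, and confidence interval for each test.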

In this tutorial, we are going to learn what t-tests in R are.

I'd like to bootstrap a two-sample t-test. I have two groups (women and men) of unequal sizes, and I do not assume equal variances. I'm not sure whether my code or my thinking is correct, because in the end I got 0 bootstrapped t-statistics greater than the t-statistic from the original data. Why not simply bootstrap the differences between women and men? How should the bootstrap sample size be chosen? In other words, is drawing 200 elements from the 377- and 306-element groups OK, or should the samples have 377 and 306 elements, respectively, as this post recommends? The idea of subtracting the means came from gung's reply; I thought it could be carried over directly from the ANOVA case to Student's t-test.

I corrected my code, but the results still look odd to me: `t.est` ... `t.vect)`. Is it possible that with the original samples the difference between means is "so significant" (p-value = 4.066e-08), while the bootstrap samples show that it actually is not (0.5042)?

As noted, your bootsamples should have the same $n_j$s as your original data. Next, recognize that there are several ways to bootstrap: e.g., you can bootstrap your data directly or bootstrap a test statistic, you can bootstrap your sampling distribution or a null distribution, etc. You need to make sure you understand which kind of thing you're doing. You can bootstrap simply the mean difference, if you want to. In the linked post, I bootstrapped the null distribution of the test statistic. That is essentially what you are doing in your code.

Also, because of the ways tests can differ, the bootstrapping strategy may need to be customized to the test you want to perform. In the linked post, I bootstrapped an $F$-statistic, but the way the $F$-test works is somewhat different from how a $t$-test works. Since you are bootstrapping the test statistic, you are somewhat safe from that.

In your case, think about the logic of the type of bootstrap you used. You bootstrapped a null sampling distribution for your $t$-statistic. Your observed $t$-statistic is so extreme that none of the bootstrapped $t$s overlapped with it. The implication is that the probability ($p$-value) of getting a $t$-statistic as far or further from $0$ under your bootstrapped null sampling distribution is $< (1/10000) / 2$. In other words, your result is highly significant. (However, you should re-do your bootstrap using the correct $n_j$s before you go with this result.)

I would condition on the total sample size, not fix the group sizes. Of course, if your group sizes are fixed in advance, then do condition on those; my answer is for the case where they are not. I construct a two-column matrix with the first column indicating group membership and the second column holding the observed values for the two groups stacked underneath each other. Then I bootstrap rows of the matrix and calculate the observed difference in means between the groups. Lastly, I use the bootstrapped differences to calculate an approximate p-value for the test of zero difference in means: `pvalfunc` ... `target)) }`.
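The two bootstrap strategies discussed here can be sketched in R roughly as follows. This is not the code from the original posts, which did not survive intact. The data are simulated stand-ins with the group sizes from the question, and the helper names (`welch_t`, `boot_null_t`, `boot_diff`), the number of replicates, the `+ 1` adjustment in the first p-value, and the tail-proportion p-value in the second approach are all assumptions made for illustration.

```r
# Simulated stand-ins for the two groups; sizes follow the question (377, 306),
# the values themselves are made up.
set.seed(42)
women <- rnorm(377, mean = 0.0, sd = 1.0)
men   <- rnorm(306, mean = 0.5, sd = 1.5)

# Welch t-statistic (no equal-variance assumption).
welch_t <- function(x, y) {
  (mean(x) - mean(y)) / sqrt(var(x) / length(x) + var(y) / length(y))
}
t_obs <- welch_t(women, men)

# Approach 1: bootstrap the NULL distribution of the t-statistic.
# Each group is centred on its own mean so that equal means hold, then
# resampled with replacement at its original size.
boot_null_t <- function(x, y, B = 2000) {
  x0 <- x - mean(x)
  y0 <- y - mean(y)
  replicate(B, welch_t(sample(x0, replace = TRUE), sample(y0, replace = TRUE)))
}
t_null <- boot_null_t(women, men)

# Two-sided p-value; the +1 terms (an assumption of this sketch) keep the
# estimate from being exactly zero when no bootstrapped t is as extreme.
p_null <- (sum(abs(t_null) >= abs(t_obs)) + 1) / (length(t_null) + 1)

# Approach 2: condition on the total sample size only.
# Stack the data in a two-column matrix and resample whole rows, so the
# group sizes vary from one bootstrap sample to the next.
dat <- cbind(group = rep(c(0, 1), times = c(length(women), length(men))),
             value = c(women, men))

boot_diff <- function(dat, B = 2000) {
  n <- nrow(dat)
  replicate(B, {
    rows <- dat[sample.int(n, n, replace = TRUE), , drop = FALSE]
    mean(rows[rows[, "group"] == 1, "value"]) -
      mean(rows[rows[, "group"] == 0, "value"])
  })
}
diffs <- boot_diff(dat)

# Approximate two-sided p-value for zero difference: twice the smaller
# proportion of bootstrapped differences falling on either side of zero.
p_diff <- min(1, 2 * min(mean(diffs <= 0), mean(diffs >= 0)))
```

In the first approach, both `sample` calls keep the original group sizes, which is the point made above about using the correct $n_j$s. The second approach only makes sense when the group sizes were not fixed in advance.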
